Scratching the Surface: What’s Really Powering Large Language Models

You type your prompt into ChatGPT, Claude, Grok or Gemini and hit enter. Behind the scenes, an enormous brain hums into life. Entirely mimicking your thought processes with the power of a thousand humans, the brain starts researching, bringing together facts from many of its own giant databases, checking the web, rechecking what it’s about to display, before presenting a confident, consistent, factual explanation of what you were looking for. No need to check the output, this is AI! It’s smarter than you!

This, however, isn’t what’s happening.

Every time you type a prompt and see those blinking dots, there’s no “thinking” or “understanding”. It’s relentless guessing, one word at a time, stitched together so quickly that it looks like fluency. It’s hard to tell if we should marvel at the fluency, or be wary of the illusion it creates.

Strip away the branding and a large language model (LLM) is a probability engine. Feed it some text and it calculates which word is most likely to come next, based on patterns absorbed from oceans of past text. Pick one, add it, repeat.
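To make "probability engine" concrete, here is a minimal sketch in Python. It assumes a tiny made-up corpus and simply counts which word follows which; real LLMs learn far richer patterns with neural networks, so treat this as an illustration of the idea rather than how any commercial model is built. The names `CORPUS`, `build_counts` and `next_word_probabilities` are invented for this example.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus standing in for the "oceans of past text" (an assumption).
CORPUS = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."


def build_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follow-on counts for `word` into probabilities."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}


counts = build_counts(CORPUS)
probs = next_word_probabilities(counts, "the")

# The single most likely word to follow "the" in this toy corpus.
most_likely = max(probs, key=probs.get)
print(most_likely, probs)
```

In this corpus "cat" follows "the" twice out of six times, so it wins. Nothing here knows what a cat is; it is only counting.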

The underlying mathematics is intricate, beyond what most of us need day to day, but the essence is simple: it’s not reasoning, it’s predicting. What you’re really seeing isn’t truth emerging, it’s statistical plausibility dressed up as prose.

Sound familiar? You have likely been using a tiny version of this every day for decades.

When you type a text message, your phone’s autocomplete suggests the next word. It learns your specific language preferences and uses them to suggest the three words you would most likely choose next. Handy for fixing typos; disastrous if you let it write entire sentences. Here’s what happened when I created a message solely from my phone’s suggestions:

What is going on here?

The purpose of the predictive text model on your phone is to help you (as a human) type messages more quickly, especially when spelling difficult words or typing long messages. My initial choices were based on my most frequently typed words (I must talk about myself a lot in my messages!). Whilst some of the existing words in my message helped the decision-making process, the predicted words largely came from information that is kept about me, personally.

In contrast, when using a commercial LLM, the purpose is to:

i) use as many words from the conversation in the prediction as possible, normally the entire conversation so far (the amount of text the model can take in at once is known as its context window)

ii) predict the next word based on all available training data, including text which isn’t “yours”

This is useful for giving next word predictions within the context of your prompt, and further allowing for an enormous amount of information to be pulled into the answer. But when we use these commercial LLMs, we never have to pick the next word. So what’s happening?

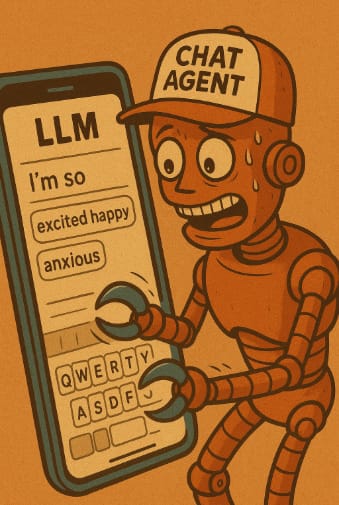

Here’s the part most people miss: you never interact with the raw model, you interact with a chat agent. You type in your prompt and the chat agent takes it from there. It puts your entire prompt into the LLM, receives a list of words it can choose from, and picks one for you. To select the next word, the chat agent then feeds the entire text so far (your prompt plus everything written to this point) back into the LLM. What you see is text being “written” out. What’s happening underneath is the agent carefully coordinating each word, over and over, until the answer is complete.
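The loop described above can be sketched in a few lines of Python. Everything here is hypothetical: `toy_model` is a hard-coded stand-in for an LLM, `END` is an invented stop marker, and `chat_agent` always takes the top-scoring word, which is only one of several ways an agent might choose.

```python
END = "<end>"  # invented marker meaning "stop writing"


def toy_model(text):
    """Hypothetical stand-in for an LLM: text so far in, scored candidate words out.

    A real model considers the whole text; this toy only looks at the last word.
    """
    table = {
        "hello": {"there": 0.7, "world": 0.3},
        "there": {"friend": 0.6, END: 0.4},
        "friend": {END: 1.0},
    }
    words = text.split()
    last = words[-1].lower() if words else ""
    return table.get(last, {END: 1.0})


def chat_agent(prompt, model, max_words=10):
    """Repeatedly ask the model for candidates, pick one, and append it."""
    text = prompt
    answer = []
    for _ in range(max_words):
        candidates = model(text)              # the whole text so far goes back in
        choice = max(candidates, key=candidates.get)
        if choice == END:                     # the model signals the answer is done
            break
        answer.append(choice)
        text = text + " " + choice            # the chosen word becomes part of the input
    return " ".join(answer)


reply = chat_agent("Hello", toy_model)
print(reply)
```

The `max_words` cap is there because nothing guarantees the model will ever offer the stop marker; the agent needs its own rule for when to stop.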

What you don’t see are the choices being made behind the scenes. Every response you get from an LLM is shaped by a handful of settings and trade-offs. Parameters such as temperature decide how “creative” the model is in picking words. Programmatically, it’s also possible to vary things such as how much of the conversation it can “remember”, the length of the answer, the tone and guardrails it should follow, the LLM version used, or when to stop writing. Furthermore, some requests require the agent to reach out to other agents to fetch or create extra information. For example, if you ask it to generate an image, it may hand the task off to an image generator, using the LLM to describe to that generator what to do.
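As a rough illustration of what temperature does, the sketch below uses the common approach of dividing each candidate's raw score by the temperature before converting scores to probabilities (a softmax). The scores and words are made up, the function names are invented for this example, and providers may implement sampling differently.

```python
import math
import random


def apply_temperature(scores, temperature):
    """Convert raw scores to probabilities; temperature must be greater than 0.

    Low temperature sharpens the distribution, high temperature flattens it.
    """
    scaled = [s / temperature for s in scores.values()]
    top = max(scaled)                              # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return {word: e / total for word, e in zip(scores, exps)}


def pick_word(scores, temperature, rng):
    """Sample one word according to the temperature-adjusted probabilities."""
    probs = apply_temperature(scores, temperature)
    return rng.choices(list(probs), weights=list(probs.values()), k=1)[0]


# Made-up scores for words that might follow "The cat sat on the".
scores = {"mat": 2.0, "sofa": 1.0, "moon": 0.0}

cold = apply_temperature(scores, 0.2)   # almost always "mat"
hot = apply_temperature(scores, 2.0)    # "sofa" and "moon" get a real chance
word = pick_word(scores, 1.0, random.Random(0))
print(cold, hot, word)
```

At a temperature of 0.2 "mat" takes over 99% of the probability; at 2.0 it drops to roughly half, which is where the more "creative" and less predictable choices come from.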

While these parameters can be adjusted in principle, commercial models arrive with the defaults locked in, meaning your experience is shaped as much by the provider’s choices as by your own.

Next time you press enter on a prompt and see those dots blinking, picture a tin-can robot hammering autocomplete, fast enough to look like fluency but still just predicting. Whilst it’s tempting to think of an LLM as a single source of answers for all of your questions, in reality there are many other things going on in the background. This is obviously a simplification, but it should start to embed the idea that LLMs aren’t the answer for everything. LLMs are brilliant at some things, like drafting text, summarising, even sketching bits of code. They’re dreadful at others, like reasoning, sticking to facts and planning ahead. The catch is that they sound equally confident at both. This confident delivery, even when wrong, is what makes them such convincing, albeit unintentional, “fluent liars”.

The real challenge isn’t spotting where LLMs stumble. It’s resisting the fluency that makes failure look like competence. Over the next few posts, we’ll dig into where LLMs truly add leverage and where that fluency only creates false confidence.

---

About Larry

I’m a lifelong technologist with a passion for problem solving. I have over a decade of trading experience and a decade of technical experience within financial institutions, where I’ve started, grown and run very profitable businesses. I’ve seen many successful and unsuccessful projects in my time, especially in the banking world. My hobbies include Brazilian Jiu-Jitsu, climbing and cryptic crossword solving.

About Ash

I am a strategy and operations professional with 14 years of financial services experience, driven by a passion for technology. I have led teams and projects from full technology builds through to client-facing strategy. What drives me is seeing how people, processes, data and ideas connect to create solutions that work in practice. Outside of work, I am usually exploring food and cocktails, or practising yoga.